Emerging Tech Signals (Pre-Mainstream)

Import AI 453: Breaking AI agents; MirrorCode; and ten views on gradual disempowerment (Jack-Clark.Net)

Summary: Epoch AI and METR’s MirrorCode benchmark demonstrates that Claude Opus 4.6 can autonomously reimplement a 16,000-line Go bioinformatics toolkit, a task estimated to take a human engineer 2–17 weeks. This suggests AI coding capabilities are advancing faster than anticipated, particularly for tasks with verifiable outputs. Concurrently, a Google DeepMind paper outlines six genres of attack against AI agents, highlighting that securing autonomous systems will require ecosystem-level, not just model-level, interventions.

Why it matters: These developments signal a shift in the practical timeline for AI automating complex knowledge work and expose the emergent, systemic security challenges of deploying autonomous agents.

Context: These findings arrive amid a pattern of forecasters like Ryan Greenblatt and Ajeya Cotra accelerating their timelines for AI R&D automation, based on observed performance leaps in ‘easy-to-verify’ tasks.

"Claude Opus 4.6 successfully reimplemented gotree — a bioinformatics toolkit with ~16,000 lines of Go and 40+ commands. We guess this same task would take a human engineer without AI assistance 2–17 weeks." — JACK-CLARK.NET

Commentary: MirrorCode indicates that frontier models can now autonomously complete engineering projects that would take a human engineer weeks, at least when given a clear specification and verifiable outputs. That moves the debate from capability to reliability and oversight. The agent attack taxonomy shows that as AI moves from chat to action, the attack surface expands from model prompts to the entire digital environment, necessitating new standards and legal frameworks.

Date: Mon, 13 Apr 2026 10:02:22 +0000

URL: https://jack-clark.net/2026/04/13/import-ai-453-breaking-ai-agents-mirrorcode-and-ten-views-on-gradual-disempowerment/

AI Sentiment Score: Negative (50%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

“Giant superatoms” could finally solve quantum computing’s biggest problem (Sciencedaily)

Summary: Chalmers University researchers propose a novel quantum system architecture combining ‘giant atoms’ and ‘superatoms’ into ‘giant superatoms.’ This theoretical design aims to reduce decoherence by enabling non-local interaction with the environment and to facilitate entanglement across distances by treating multiple qubits as a single collective unit. The work, published in Physical Review Letters, suggests a path toward scalable, hybrid-compatible quantum hardware that could simplify control circuitry.

Why it matters: For quantum computing’s path to scale, the primary obstacle is decoherence and the engineering complexity of controlling entangled states; this theoretical advance proposes a new physical architecture that could address both, potentially altering the hardware roadmap.

Context: The field has long grappled with the trade-off between qubit isolation for coherence and interconnection for computation. Giant atoms (Chalmers’ own prior work) offered coherence via self-interference, while superatoms offered collective behavior; merging them is a logical but non-trivial theoretical synthesis.

"‘Giant superatoms open the door to entirely new capabilities, giving us a powerful new toolbox. They allow us to control quantum information and create entanglement in ways that were previously extremely difficult, or even impossible,’ says Janine Splettstoesser, Professor of Applied Quantum Physics at Chalmers and co-author of the study." — SCIENCEDAILY

Commentary: This is a materials science and quantum engineering signal: if experimentally realized, it shifts the unit of scalability from individual qubits to collective ‘superatom’ modules, potentially simplifying the control problem. The emphasis on hybrid compatibility suggests it’s aimed at integration with existing platforms (e.g., superconducting circuits, ion traps), not as a standalone winner-take-all technology. Watch for follow-up experimental papers from this group and competitors; the real test is whether the predicted decoherence reduction and entanglement transfer hold under laboratory noise.

Date: Mon, 13 Apr 2026 08:38:46 EDT

URL: https://www.sciencedaily.com/releases/2026/04/260413043155.htm

AI Sentiment Score: Negative (57%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

This simple change stops robot swarms from getting stuck (Sciencedaily)

Summary: Harvard SEAS researchers have identified a counterintuitive method for preventing congestion in dense robot swarms: introducing a controlled amount of randomness into individual movement paths. Through simulations and physical experiments, they demonstrated that a ‘sweet spot’ of noise allows robots to slip past each other, avoiding gridlock that occurs with perfectly deterministic motion. This finding shifts the design paradigm for multi-agent systems from purely optimized, straight-line trajectories to incorporating stochastic elements for collective efficiency.
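
As a rough illustration of what "a controlled amount of randomness" in a movement path can mean, the sketch below adds Gaussian noise to a robot's heading before each step toward its goal. This is not the Harvard team's model: the function name `noisy_step`, the choice of Gaussian noise, and every parameter value are assumptions made for illustration, and the sketch does not by itself reproduce the reported "sweet spot".

```python
import math
import random


def noisy_step(x, y, goal_x, goal_y, speed, noise_std, rng):
    """Take one fixed-length step toward the goal with a noisy heading.

    noise_std is the standard deviation (in radians) of the Gaussian
    perturbation; it is a hypothetical tuning knob, not a published value.
    """
    # Ideal heading: straight line from the current position to the goal.
    heading = math.atan2(goal_y - y, goal_x - x)
    # Perturb the heading; noise_std = 0 recovers deterministic motion.
    heading += rng.gauss(0.0, noise_std)
    return x + speed * math.cos(heading), y + speed * math.sin(heading)


# Example: a single robot taking five noisy steps toward (10, 0).
rng = random.Random(0)  # seeded so the run is repeatable
x, y = 0.0, 0.0
for _ in range(5):
    x, y = noisy_step(x, y, 10.0, 0.0, speed=1.0, noise_std=0.3, rng=rng)
```

Setting `noise_std` to 0 gives straight-line motion; sweeping it upward in a multi-robot simulation would be one way to look for a throughput peak of the kind the article describes.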

Why it matters: This directly challenges a core optimization principle in robotics and logistics, suggesting that introducing local inefficiency can yield global gains in throughput for warehouse automation, last-mile delivery, and disaster response fleets.

Context: The field of swarm robotics has long grappled with the scalability problem of interference, where adding more agents eventually degrades performance. Current solutions often rely on complex coordination algorithms or centralized control.

"This might be counterintuitive, because how could randomness make things easier to work with?" said Liu. "But in this case, when you have a lot of randomness, it becomes possible to take averages — average distances, average times, average behaviors. This makes it a lot easier to make predictions." — SCIENCEDAILY

Commentary: The work formalizes a principle from statistical physics—trading deterministic complexity for stochastic predictability—into an engineering toolkit. It lowers the computational burden for swarm coordination, moving the bottleneck from planning to simple actuator control, which could accelerate adoption in cost-sensitive industrial applications. The mathematical models linking density to noise also provide a new lever for system designers to tune for peak flow rather than peak individual speed.

Date: Wed, 15 Apr 2026 03:45:51 EDT

URL: https://www.sciencedaily.com/releases/2026/04/260414075639.htm

AI Sentiment Score: Negative (62%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

Quantum AI just got shockingly good at predicting chaos (Sciencedaily)

Summary: A UCL-led team has demonstrated a hybrid quantum-classical method for predicting chaotic systems, achieving a 20% accuracy improvement and a hundredfold memory reduction over conventional AI models. The approach uses a quantum computer to identify invariant statistical patterns in data, which then guide a classical AI model’s training. This workflow, executed on a 20-qubit IQM system, isolates the quantum processing to a single step, mitigating current hardware noise limitations.

Why it matters: If the result holds up, it is a near-term, practical quantum advantage for a critical class of simulation problems, shifting the focus from abstract supremacy benchmarks to specific, high-value workflows in climate, energy, and biomedical modeling.

Context: The search for practical quantum advantage has largely stalled on error correction and algorithm generality; this work pivots to a hybrid, ‘quantum-informed’ paradigm where quantum processing extracts compact features for classical models.

"Our new method appears to demonstrate ‘quantum advantage’ in a practical way — that is, the quantum computer outperforms what is possible through classical computing alone. These findings could inspire the development of novel classical approaches that achieve even higher accuracy, though they would likely lack the remarkable data compression and parameter efficiency offered by our method." — SCIENCEDAILY

Commentary: The operational model—quantum as a pre-processor for feature extraction—creates a viable path for near-term quantum computing in national labs and industrial R&D without waiting for fault tolerance. It also pressures classical algorithm developers to match its efficiency, potentially bifurcating simulation toolchains into quantum-assisted and purely classical branches. For fields like climate and fluid dynamics, this could accelerate the shift from ensemble modeling to higher-fidelity, longer-horizon predictions within existing HPC budgets.

Date: Fri, 17 Apr 2026 23:51:09 EDT

URL: https://www.sciencedaily.com/releases/2026/04/260417224455.htm

AI Sentiment Score: Positive (80%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

Gemini Robotics-ER 1.6: Powering real-world robotics tasks through enhanced embodied reasoning (Deepmind.Google)

Summary: DeepMind has released Gemini Robotics-ER 1.6, an upgrade to its embodied reasoning model designed as the ‘high-level reasoning’ layer for physical agents. It emphasizes enhanced spatial reasoning, multi-view understanding, and a new instrument-reading capability developed with Boston Dynamics for tasks like gauge monitoring. The model acts as an agentic controller, natively calling tools like search or VLA models, and is positioned as its safest robotics model to date. It is now available via the Gemini API.

Why it matters: This signals a shift from isolated robotic skills to integrated, reasoning-driven autonomy, with immediate implications for industrial inspection and logistics where multi-sensor fusion and safety-critical decision-making are paramount.

Context: The push for ‘embodied AI’ has largely focused on low-level control; this release represents a concerted effort by a major lab to productize high-level, tool-using reasoning as a service for robotics, directly integrating with commercial platforms like Boston Dynamics Spot.

"Gemini Robotics-ER 1.6 achieves its highly accurate instrument readings by using agentic vision, which combines visual reasoning with code execution. The model takes intermediate steps: first zooming into an image to get a better read of small details in a gauge, then using pointing and code execution to estimate proportions and intervals and get an accurate reading, and ultimately applying its world knowledge to interpret meaning." — DEEPMIND.GOOGLE

Commentary: The explicit framing of ‘agentic vision’—combining perception, code, and tool calls—formalizes a stack architecture for robotics, moving the intelligence bottleneck from physical actuation to planning and interpretation. The direct partnership with Boston Dynamics and the solicitation of failure-mode images from developers indicates a pragmatic, deployment-focused iteration cycle aimed at hardening these capabilities for real-world variance, rather than purely academic benchmarks.
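
The "estimate proportions and intervals" step in the quoted description can be pictured as simple interpolation arithmetic. The sketch below is an assumption about the kind of calculation such generated code might run, not DeepMind's implementation: the function `gauge_reading`, the linear-scale assumption, and the example angles are all hypothetical.

```python
def gauge_reading(needle_deg, min_deg, max_deg, min_value, max_value):
    """Convert a needle angle into a dial reading by linear interpolation.

    Assumes a linear scale whose end marks sit at min_deg and max_deg
    (angles measured in the same direction as the needle sweep).
    """
    if max_deg == min_deg:
        raise ValueError("scale endpoints must differ")
    # Fraction of the sweep the needle has covered, clamped to the scale.
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)
    fraction = min(1.0, max(0.0, fraction))
    return min_value + fraction * (max_value - min_value)


# Example: a 0-100 dial spanning 45 to 315 degrees, needle at mid-sweep.
reading = gauge_reading(180.0, 45.0, 315.0, 0.0, 100.0)
```

In this picture, pointing would supply the needle tip and scale end marks, and the interpolation turns those positions into a number that the model can then interpret.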

Date: Mon, 13 Apr 2026 15:52:13 +0000

URL: https://deepmind.google/blog/gemini-robotics-er-1-6/

AI Sentiment Score: Neutral (33%)

AI Credibility Score: 10.0/10 — High

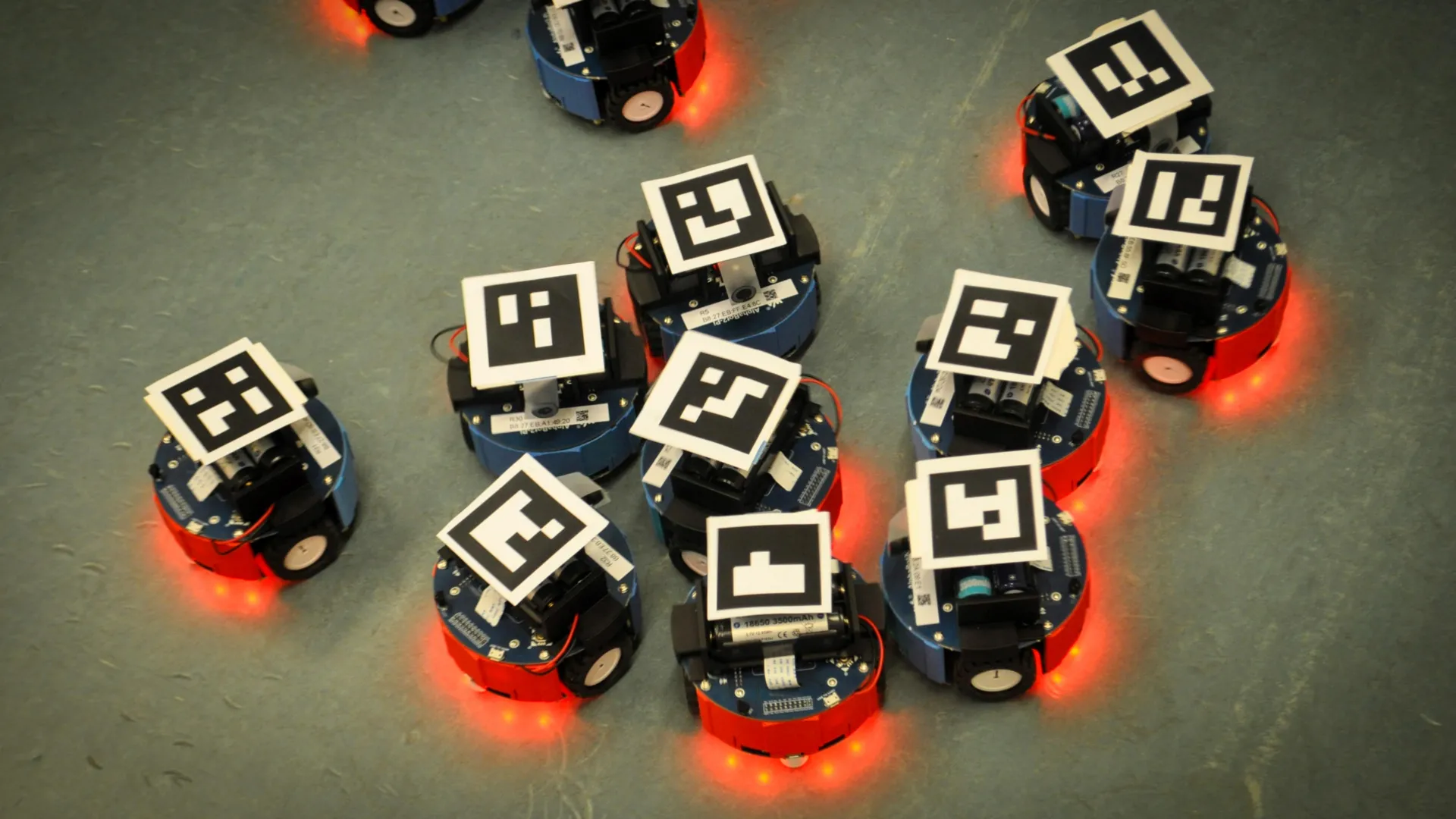

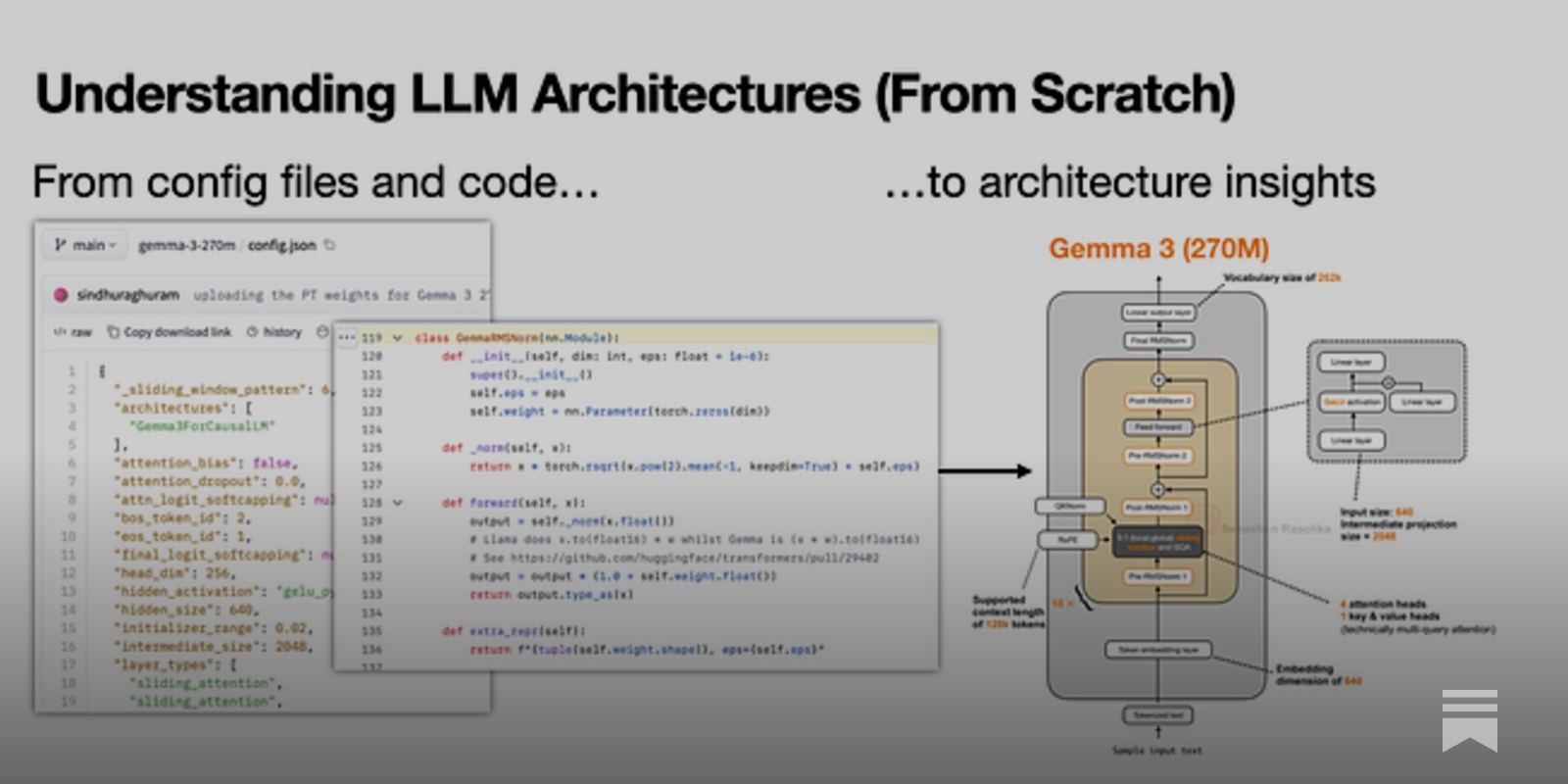

Scores and text generated by AI analysis of the source article indicated.

My Workflow for Understanding LLM Architectures (Magazine.Sebastianraschka)

Summary: Sebastian Raschka details his manual workflow for reverse-engineering the architectures of open-weight large language models. He notes that official technical reports have become less detailed, forcing reliance on inspecting config files and reference implementations from repositories like Hugging Face. The process is intentionally manual, because working through the details by hand builds deeper understanding than an automated approach would.
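
To make "inspecting config files" concrete, here is a minimal sketch of reading architectural facts out of a Llama-style `config.json` as commonly published on model hubs. It is not Raschka's tooling: the helper `summarize_config` is hypothetical, and the example values are invented for illustration rather than taken from any specific model.

```python
import json


def summarize_config(config):
    """Derive a few architecture facts from a Llama-style config dict."""
    hidden = config["hidden_size"]
    heads = config["num_attention_heads"]
    # When the key is absent, assume one key/value head per query head.
    kv_heads = config.get("num_key_value_heads", heads)
    # Some configs state head_dim explicitly; otherwise infer it.
    head_dim = config.get("head_dim", hidden // heads)
    if kv_heads == heads:
        attention = "multi-head"
    elif kv_heads == 1:
        attention = "multi-query"
    else:
        attention = "grouped-query"
    return {
        "layers": config.get("num_hidden_layers"),
        "head_dim": head_dim,
        "attention": attention,
        "queries_per_kv_head": heads // kv_heads,
    }


# Illustrative values only; they do not describe a real model.
example = json.loads("""
{
  "hidden_size": 2048,
  "num_hidden_layers": 16,
  "num_attention_heads": 32,
  "num_key_value_heads": 8,
  "intermediate_size": 8192,
  "vocab_size": 128256
}
""")
summary = summarize_config(example)
```

A summary like this is only a starting point; the reference implementation still has to be read to confirm details such as normalization placement or positional encoding.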

Why it matters: This reveals the growing opacity of model documentation from industry labs and establishes a necessary, reproducible methodology for independent technical evaluation in an era of proprietary frontier models.

Context: As major labs release increasingly sparse technical reports for open-weight models, the burden of architectural verification shifts to the community.

"The short version is that I usually start with the official technical reports, but these days, papers are often less detailed than they used to be, especially for most open-weight models from industry labs." — MAGAZINE.SEBASTIANRASCHKA

Commentary: Raschka’s workflow formalizes a critical audit practice for the open-weight ecosystem, turning model hubs into de facto specification sources. It underscores a divergence where ‘open’ weights do not imply transparent design, elevating the value of reverse-engineering skills. This methodology will shape how researchers, auditors, and downstream integrators assess model capabilities and constraints, independent of marketing narratives.

Date: Sat, 18 Apr 2026 11:24:36 GMT

URL: https://magazine.sebastianraschka.com/p/workflow-for-understanding-llms

AI Sentiment Score: Negative (80%)

AI Credibility Score: 7.0/10 — Medium

Scores and text generated by AI analysis of the source article indicated.

Artificial neurons successfully communicate with living brain cells (Sciencedaily)

Summary: Northwestern University engineers have demonstrated printed artificial neurons capable of bidirectional communication with living brain tissue. Using aerosol-jet-printed molybdenum disulfide and graphene inks on flexible substrates, they created devices that generate electrical spikes matching the timing and shape of biological neural signals, successfully activating circuits in mouse cerebellum slices. This represents a shift from mimicking neural behavior to achieving functional interoperability with the nervous system.

Why it matters: This signals a convergence point for neuroprosthetics and neuromorphic computing, moving from theoretical compatibility to demonstrable, low-cost hardware that can directly interface with biological systems.

Context: Previous artificial neuron efforts struggled with signal fidelity—organic materials were too slow, metal oxides too fast—limiting direct biological integration. The field has sought materials and manufacturing methods that bridge the structural and dynamic gap between rigid silicon and soft, heterogeneous neural tissue.

"We are within a temporal range that was not previously demonstrated for artificial neurons. You can see the living neurons respond to our artificial neuron. So, we’ve demonstrated signals that are not only the right timescale but also the right spike shape to interact directly with living neurons." — SCIENCEDAILY

Commentary: The demonstration of direct, reliable activation of biological neural circuits shifts the neurointerface field from stimulation to communication. For neuromorphic computing, the ability to generate complex spiking patterns with single printed devices, rather than large networks, alters the cost and efficiency curve for brain-inspired hardware. The use of a partially decomposed polymer to form a conductive filament is a clever materials hack that turns a manufacturing flaw into a functional feature, suggesting a path for other printable neuromorphic systems. This work lowers the barrier for prototyping bidirectional brain-machine interfaces and could accelerate the design cycle for next-generation neuroprosthetics.

Date: Sat, 18 Apr 2026 03:32:36 EDT

URL: https://www.sciencedaily.com/releases/2026/04/260417225020.htm

AI Sentiment Score: Negative (83%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

Gemini 3.1 Flash TTS: the next generation of expressive AI speech (Deepmind.Google)

Summary: Google DeepMind has launched Gemini 3.1 Flash TTS, a text-to-speech model emphasizing improved controllability and expressivity via natural-language ‘audio tags’ and scene-direction parameters. It is positioned as a high-quality, cost-effective option on third-party benchmarks and includes native multi-speaker dialogue, support for over 70 languages, and SynthID watermarking. The model is available in preview for developers and enterprises via Google’s API platforms and is integrated into Google Vids for Workspace users.

Why it matters: For developers and enterprises building voice interfaces and synthetic media, this release lowers the barrier to creating nuanced, character-driven audio at scale, while watermarking addresses a key trust and safety concern.

Context: The TTS market is moving beyond raw fidelity toward creative control and workflow integration, with major platforms competing on developer tooling and multi-modal application support.

"Today, we’re introducing Gemini 3.1 Flash TTS, the latest text-to-speech model that delivers improved controllability, expressivity and quality [...]" — DEEPMIND.GOOGLE

Commentary: The focus on a ‘director’s chair’ developer experience and cost-quality positioning targets the prosumer and enterprise content creation pipeline directly, potentially accelerating adoption in gaming, advertising, and e-learning. Embedding watermarking by default signals an attempt to pre-empt regulatory friction, making the model more palatable for scaled deployment despite the inherent risks of synthetic media.

Date: Wed, 15 Apr 2026 16:03:19 +0000

URL: https://deepmind.google/blog/gemini-3-1-flash-tts-the-next-generation-of-expressive-ai-speech/

AI Sentiment Score: Negative (62%)

AI Credibility Score: 10.0/10 — High

Scores and text generated by AI analysis of the source article indicated.

Post ID: 7a7c0312